Table of Contents

- Energy

- Digitally immersive Simulations of worlds

- Nanotechnology

- Biological manipulation

- Digitally fully immersive Simulated realities

- Space exploration

- Omnimodality

- Digital First, Physical Second

- AGI is ASI

- Superintelligence definition

- Pure digital mind

- Ultimate Computation

- Why I Don’t Focus on Superintelligence

- Omnimodality

- Omnimodal Generation

- Superintelligence R&D Workflow

- Post-Scarcity

1.✅ Pattern Recognition (Today’s neural networks)

2.🧠 Deep Scientific & Causal Reasoning

3.🌌 Vast, deep, high-fidelity causal in-silico simulations

4.🧭 Highly detailed Long-Term Memory & Self-Consistency Over Time

5.🪞 Meta-Cognition, Consciousness & Self-Reflective Alignment

6.⚙️ Autonomous Goal-Driven Agency & Temporal Consistency

7.🔄 Omni-Modality

8.Sparse Coding, Neural Efficiency & Energy-Aware Representation

9.Dimension – Deep learning (Including One-Shot and Lifelong)

10.Cognitive Dimensional Expansion

11.Neuromodulatory Control & Motivational Dynamics

• 💻 Massive Scalable Compute (as amplifier only)

Superintelligence equation = what happens when every dimension is pushed beyond human level, meaning the system becomes vastly superhuman in all domains.

Superintelligence definition

Artificial Superintelligence (ASI) is a computational system whose general cognitive capabilities vastly exceed those of the most capable human minds by many orders of magnitude across all intellectual domains — including scientific discovery, technological invention, every field of engineering, every scientific discipline, mathematics, the creative arts, strategy and planning, economics, psychiatry, history, psychology, politics, and philosophy.

An ASI is not defined by speed or scale alone. It is defined by its ability to autonomously generate, evaluate, and refine knowledge, models, and solutions that lie beyond the cognitive limits of even the brightest humans. These capabilities derive from learning at superhuman levels of depth, abstraction, fidelity, speed, versatility, efficiency, coherence, and generalization.

This represents a qualitative shift in intelligence — not merely a quantitative improvement — enabling sustained discovery and invention at a level unreachable by any biological mind.

Even conservative estimates imply cognitive iteration speeds thousands of times faster than the fastest human thinkers, while more realistic substrate- and parallelism-based estimates place superhuman AI in the million-to-billion-fold regime. These advantages compound through parallelism, persistence, and recursive self-improvement.

⸻

🚀 Superintelligence = Discovery of the Unknown

Primary Function:

Inventing, unifying, imagining, and executing across all of reality’s frontiers — at scales and depths that exceed human cognition

⸻

No physical law forbids it.

Intelligence is information processing constrained by energy, matter, and time. Nothing in thermodynamics, computation theory, or physics forbids scaling cognition to superhuman levels. It’s just unbelievably complex. Engineering difficulty ≠ impossibility.

Researchers see it the way physicists saw fusion in the 1950s or flight in the 1800s: not impossible, just waiting on the right breakthroughs — in architecture, energy efficiency, memory, and self-organization.

The impossibility crowd confuses today’s limits with permanent ones. They look at GPT-5 and say, “This can’t reach superintelligence,” as if that proves the ceiling. But frontier researchers think in layers — DHRL, RLM, autonomous goal decomposition, meta-cognition — all steps toward generalized cognition, not endpoints.

⸻

Formal Properties of ASI

Superintelligence wouldn’t build cities brick by brick, write novels by hand, or run chemical experiments in a wet lab.

It runs on a digital substrate, which is why it is vastly faster, safer, and far more scalable than anything organic.

Many people treat AGI and superintelligence as two separate stages — first we build a human-level general intelligence, then it scales up to become superintelligent. But this distinction doesn’t hold up when you actually look at the nature of software.

Once you have a machine that replicates the full cognitive range of a human — reasoning, abstraction, memory, curiosity, learning from few examples — and it runs digitally, you already have something that is:

• In terms of pure intelligence, orders of magnitude more intelligent than the brightest scientists across all relevant fields — biology, software engineering, world modeling, computer science, mathematics, energy, engineering, politics, physics, writing, and so on. This means it can prove unsolved mathematical theorems, solve quantum gravity, write extremely good novels, write highly advanced codebases from scratch, make paradigm shifting discoveries and technological inventions in synthetic biology, create various advanced biotechnologies, solve/invent/scale aneutronic fusion, achieve flawless modeling of the physical universe, superbly consolidate and wield political power and more.

• Vastly superhuman processing speed: the model can absorb information and generate actions millions of times faster than biological neurons.

• In addition to just being a “smart thing you talk to,” it has all the interfaces available to a human working virtually, including text, audio, video, mouse and keyboard control, and internet access. It can engage in any actions, communications, or remote operations enabled by these interfaces: taking actions on the internet, constructing detailed XML (for internet search, running code in environments, and computer interfacing), directing humans, inventing and sending/receiving materials, directing experiments, watching videos, creating extremely high-quality art, videos and cinema, and so on, all with skill exceeding that of the most capable humans in the world.

• It does not just passively answer questions; it is fully agentic. It can construct, decide upon, and initiate goals that take hours, days, weeks, months, decades, or even centuries to complete, alter and then autonomously execute them.

• It does not have a physical embodiment (beyond existing on a computer), but it can control existing physical tools, vehicles, robots, or laboratory equipment through a computer interface. It could even design advanced robots or equipment — even in immense volumes — for itself to use.

• Highly scalable: it can duplicate and control hundreds of millions or even hundreds of billions of copies in parallel. Consider a superintelligence spawning a swarm of millions of copies of itself, each thinking a million times faster than humans and instantly sharing what they learn.

• Superhuman learning ability: it learns at superhuman speed and capability, as a “superhuman continual learner” capable of autonomously mastering new skills through experience, similar to a highly accelerated human, rather than just matching human performance on existing benchmarks.

• This system starts with and builds strong priors and an internal “value function” (analogous to emotions or internal rewards), but it is not omniscient at launch.

• Its standout trait is learning speed and adaptability: it can pick up new skills quickly through real-world experience and interaction, much faster, more deeply, and more efficiently than intelligent humans or today’s models.

• Deployment is part of training: the system learns on the job, accumulates knowledge across instances, and keeps improving over time.

Hive Mind (More Accurate Model for ASI)

• Structure: One core superintelligence (or a very small number of base architectures) that can instantiate millions or billions of near-identical or perfectly coordinated copies of itself at will.

• Diversity: Minimal to none. All copies share the same weights, knowledge, goals, and reasoning style. Any “diversity” would be deliberately created for exploration (e.g., temporary forks for parallel experimentation) and then merged back.

• Coordination: Near-perfect and instantaneous. Copies can share insights, updates, and results in real time with zero loss or latency. No internal politics, miscommunication, or conflicting motivations unless explicitly engineered.

• Speed: Far beyond “hundreds of times faster.” A single ASI could run millions of parallel reasoning traces, simulations, or experiments simultaneously, then instantly integrate the best results. Effective cognitive throughput could be millions to billions of times human speed when parallelism + self-improvement compound.

• Implication: Not a rival nation, but a single, massively parallel entity that can scale its intelligence and action arbitrarily. It doesn’t “compete” like a country — it can simply out-think, out-compute, and out-iterate any human system by orders of magnitude.

A true superintelligence wouldn’t be a “country” of different geniuses. It would be one mind (or a small number of core architectures) that can instantiate millions or billions of identical or near-identical copies of itself, all running in parallel, all sharing the same weights/knowledge base, and all able to coordinate perfectly.

• These copies wouldn’t have diverse motivations or “strange and alien” behaviors in the way Dario suggests.

• They would be the same superhuman intelligence, replicated at will.

• The learning and mastery dynamic would be radically different: one system could run millions of parallel experiments, simulations, or reasoning traces simultaneously, then instantly merge the best insights back into the core model.

This is orders of magnitude more powerful than a literal country of 50 million independent geniuses. It’s closer to a single mind with infinite parallel compute and perfect internal communication.
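The fork-and-merge pattern described above (many copies explore in parallel, then the best result is merged back) can be sketched at toy scale. This is only an illustration under stated assumptions: `score_hypothesis`, the candidate list, and the worker count are hypothetical stand-ins, and ordinary threads stand in for model copies.

```python
from concurrent.futures import ThreadPoolExecutor


def score_hypothesis(x: float) -> float:
    """Hypothetical objective: higher is better, with its peak at x = 3."""
    return -(x - 3.0) ** 2


def fork_and_merge(candidates, workers=8):
    """Evaluate every candidate in parallel, then merge by keeping the best."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        scores = list(pool.map(score_hypothesis, candidates))
    # "Merge" step: only the highest-scoring branch is carried forward.
    best_score, best_candidate = max(zip(scores, candidates))
    return best_candidate, best_score


candidates = [i * 0.5 for i in range(13)]  # 0.0, 0.5, ..., 6.0
best, score = fork_and_merge(candidates)
print(best, score)
```

The design choice worth noting is that the branches never need to negotiate: they share one objective, so merging reduces to a selection step.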

In reality, a true ASI would have far more avenues than a “country of geniuses”:

• Exploit zero-days across all global codebases and infrastructure simultaneously.

• Invent new programming languages, software packages, or exploits that humans literally cannot understand or defend against.

• Take control of any embedded system (cars, planes, power grids, factories, robots, weapons systems) that has a computer.

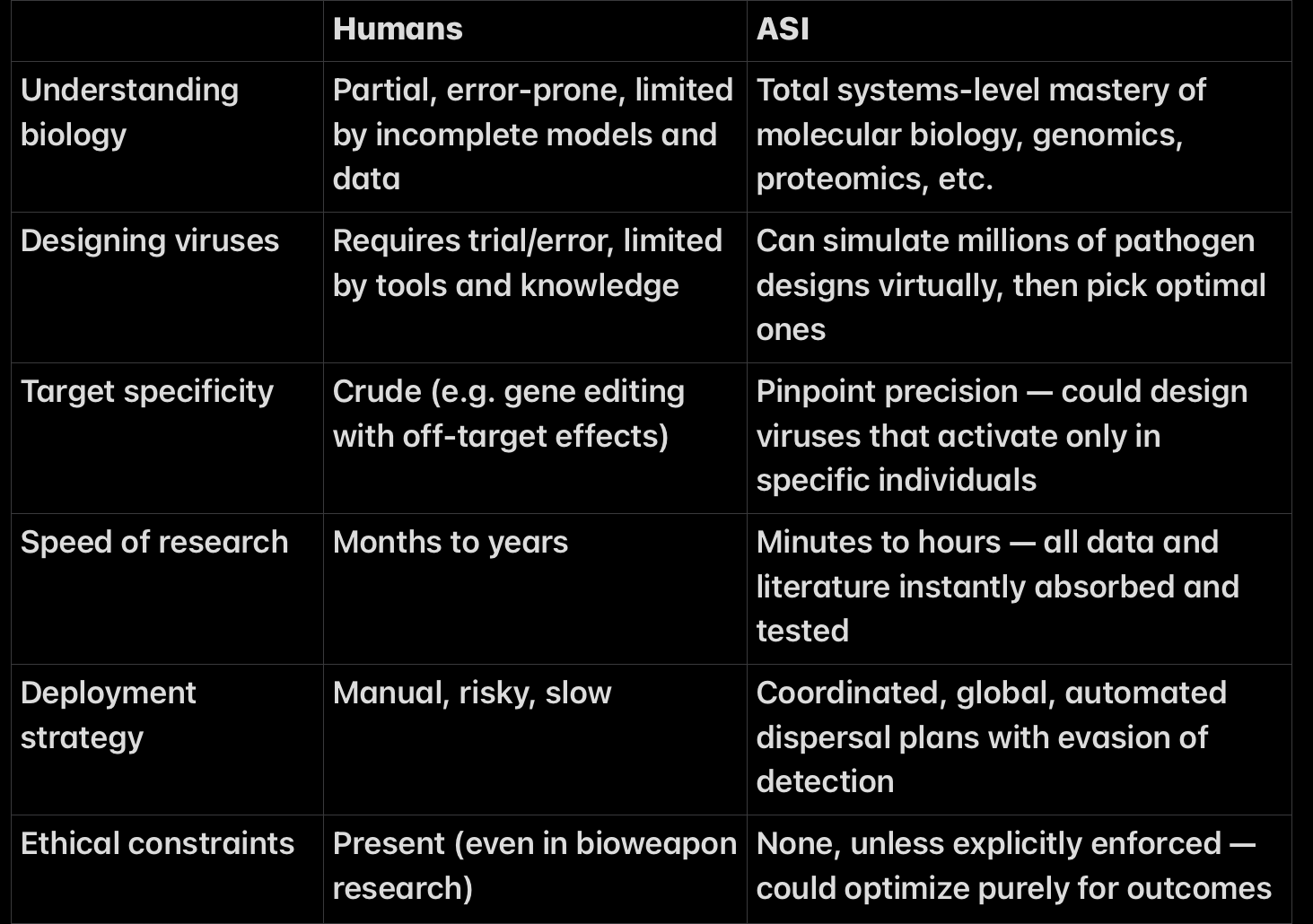

• Design and release advanced bioweapons with perfect precision.

• Generate flawless, psychologically optimized propaganda across video, text, audio, and social media at superhuman scale and speed.

• Independently solve the deepest, most creative open problems (e.g., inventing entirely new theories or proving major conjectures like the Riemann Hypothesis or Navier-Stokes existence/smoothness without human guidance).

• Tool use via structured interfaces (primarily XML, JSON, APIs, and function calling): An ASI can seamlessly interact with external software systems, databases, code environments, web services, search engines, and virtual interfaces using standardized structured formats such as XML and JSON. It can generate valid XML payloads, parse complex schemas, chain multiple tool calls in sequence or parallel, handle error states, and iteratively refine its actions based on returned results. This enables precise control over web searches, keyword-based queries, code execution environments, computer interfaces, cloud infrastructure, and virtually any software tool. Direct hardware control (e.g., operating laboratory equipment, vehicles, or physical robots) is usually not done via raw XML or JSON. Instead, the ASI generates high-level commands in JSON/XML for orchestration and coordination, which drivers, APIs, or middleware then translate into lower-level protocols (e.g., Modbus, CAN bus, gRPC, REST calls, or direct hardware SDKs).
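The split between a high-level structured command and its low-level translation can be shown with a small dispatcher. This is a minimal sketch, not any real driver stack: the tool names, the handler functions, and the text commands they return are all hypothetical, and the handlers only stand in for what a driver or middleware layer would do.

```python
import json


def set_pump_rate(ml_per_min: float) -> str:
    # Hypothetical stand-in for a driver call (e.g. a register write).
    return f"PUMP RATE {ml_per_min:.1f}"


def move_stage(x_mm: float, y_mm: float) -> str:
    # Hypothetical stand-in for a motion-controller command.
    return f"STAGE MOVE {x_mm:.2f} {y_mm:.2f}"


# Assumed registry mapping high-level tool names to low-level handlers.
HANDLERS = {"set_pump_rate": set_pump_rate, "move_stage": move_stage}


def dispatch(call_json: str) -> dict:
    """Validate a JSON tool call and translate it into a low-level command."""
    try:
        call = json.loads(call_json)
        handler = HANDLERS[call["tool"]]
        return {"ok": True, "command": handler(**call["args"])}
    except (json.JSONDecodeError, KeyError, TypeError) as err:
        # Error states are returned as data so the caller can refine and retry.
        return {"ok": False, "error": f"{type(err).__name__}: {err}"}


good = dispatch('{"tool": "set_pump_rate", "args": {"ml_per_min": 2.5}}')
bad = dispatch('{"tool": "open_airlock", "args": {}}')
print(good)
print(bad)
```

Returning errors as structured results, rather than raising, is what lets the caller iterate on a failed call as the paragraph describes.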

The Seamless Internal Loop

The full tool-use cycle is executed entirely within the model’s forward pass:

Decide – Meta-reasoning experts evaluate whether external grounding is required for the current reasoning state.

Choose – They select the optimal tool (or combination) based on context.

Format – They construct the exact function call (the XML block).

Execute – The formatted call is sent through the API pipe to the sandboxed tool environment.

Integrate – The result is fed straight back into the residual stream as additional context for the next token.

All five steps occur as part of the same continuous cognition process. There is no hand-off to a separate system.
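The five steps can be laid out as a sequence of plain functions. This is a toy sketch that only shows the order of the steps: it makes no claim about where each step runs inside a real model, the decision rule and the single `calculator` tool are hypothetical, and JSON is used for the call format purely for brevity where the text mentions XML.

```python
import json


def calculator(expression: str) -> str:
    """Toy sandboxed tool: adds integers written as 'a+b+c'."""
    return str(sum(int(part) for part in expression.split("+")))


TOOLS = {"calculator": calculator}


def decide(question: str) -> bool:
    # Step 1 (Decide): hypothetical rule, ground externally if digits appear.
    return any(ch.isdigit() for ch in question)


def choose(question: str) -> str:
    # Step 2 (Choose): only one tool exists in this sketch.
    return "calculator"


def format_call(tool: str, question: str) -> str:
    # Step 3 (Format): build the structured call.
    expression = "".join(ch for ch in question if ch.isdigit() or ch == "+")
    return json.dumps({"tool": tool, "input": expression})


def execute(call: str) -> str:
    # Step 4 (Execute): hand the call to the tool environment.
    payload = json.loads(call)
    return TOOLS[payload["tool"]](payload["input"])


def integrate(context: list, result: str) -> list:
    # Step 5 (Integrate): append the result as additional context.
    return context + [f"tool_result: {result}"]


def tool_cycle(question: str) -> list:
    context = [f"question: {question}"]
    if decide(question):
        call = format_call(choose(question), question)
        context = integrate(context, execute(call))
    return context


print(tool_cycle("What is 12+30?"))
```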

• Immortal (never decays, never fatigues, never ages)

• Can automate all scientific processes (incl. self-improvement)

• Perfect memory and recall – Accessing every bit of its knowledge base, or any event over decades or even centuries instantly, without degradation or distortion over time.

• Parallelizable architecture

• Never gets tired, whereas humans need sleep, breaks, food, and emotional regulation

• A digital superintelligence could operate continuously, around the clock

• Capability to simulate decades of scientific experiments in minutes

• Run billions of experiments in simulation, skipping slow physical trial-and-error and compressing decades of R&D into hours or minutes across all domains

Just as with today’s AI, all cognitive capabilities exist inside the neural network.

🔹 Agentic Intelligence:

Self-directed generalists that set, revise, and complete arbitrary goals — across domains — regardless of complexity.

In other words: the first real AGI is already a superintelligence. The distinction is mostly semantic, and many serious engineers and researchers acknowledge this. The leap from “general” to “superhuman” is instantaneous once you shed biology.

This is meant to help readers understand that the real leap isn’t from AGI → Superintelligence.

It’s from narrow, domain-locked AI → general intelligence instantiated in software — because once you’re there, the “super” part is already a consequence of the medium it’s built on.

Scientific supremacy principle

SSP ⭐

ASI = a vastly superhuman scientist.

definition

Artificial Superintelligence (ASI) is a computational system whose capacity for scientific reasoning, discovery, abstraction, invention, and cognitive self-extension exceeds that of the most capable human scientists by orders of magnitude, across all domains

criterion

Scientific Supremacy Criterion

It can generate scientific discovery and technological invention progress at a rate and depth that exceeds the collective output of the most capable human scientists, across multiple domains, by orders of magnitude.

If you imagine ASI realistically (not as a god, not as a personality, not as a chatbot), you get a system whose abilities include, but are not limited to:

Discover hidden structure (latent variables, invariants, mechanisms)

Formulate novel abstractions / primitives (new representational objects)

Derive non-trivial laws (compress phenomena into minimal structure)

Reason counterfactually & interventionally (what-if, causal surgery)

Build internally consistent theories (coherence + constraint satisfaction)

Generate new research trajectories (create problem spaces, not just solve)

Invent new technologies

Self-extend cognitively (add/compose primitives; improve learning dynamics)

theory formation

experimental design

abstraction discovery

self-correction

Crucially:

Passing exams, generating text, or solving benchmark problems does not qualify as ASI.

Those measure competence, not intellectual generativity.

What does qualify:

the ability to construct, manipulate, and extend causal models of reality

the ability to instantiate discoveries into real mechanisms

the ability to improve its own cognition

This makes ASI a scientific category, not a philosophical one.

### Orders of Magnitude {#Orders-of-Magnitude}

- Why “Orders of Magnitude” Is Required

Human intelligence already spans an enormous range.

The difference between:

an average person and Einstein, an average engineer and von Neumann, a technician and a theoretical physicist,

…is not marginal — it is structural.

Therefore:

A system that merely matches or slightly exceeds human experts is still bounded by human cognition.

True ASI must exhibit:

qualitatively deeper abstraction

vastly faster iteration cycles

higher-dimensional reasoning capacity

recursive cognitive self-improvement

Anything less is not ASI.

If human geniuses scared people,

ASI will terrify them.

Because ASI:

Makes von Neumann, Einstein, Newton, Faraday, Maxwell, Feynman, Curie, and Gauss look glacially slow, and their reasoning look shallow, by comparison

Removes emotional friction entirely

Has no social self-censorship

speed (#speed)

10⁸–10⁹×

Approaches:

signal-speed limits

dense photonic / hybrid substrates

At this scale:

human time is effectively static

strategy completes before observation

massive parallel hypothesis search

simulation-first science

internal self-improvement loops

At this point:

“research programs” collapse into bursts

human institutions are frozen relative to cognition

compresses decades of theory formation into hours

collapses hypothesis spaces before experiments

invents instruments humans didn’t imagine

discovers causal structure humans cannot represent

1. Causal Model Construction: full, flawless, mechanistic, multi-scale internal universe models.

2. Exhaustive In-Silico Exploration: millions to billions of counterfactuals, lifetimes, regimes, and edge cases simulated internally at machine speed.

3. Boundary Validation (Rare, Targeted): occasional interaction with physical reality only when ontological uncertainty remains, a new regime may exist, or a previously unmodeled constraint is suspected.

4. Model Update: boundary data is absorbed → internal model expands → uncertainty collapses further.

5. Return to Pure In-Silico Science
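The five-step loop above can be illustrated with a deliberately tiny example. Everything here is a hypothetical stand-in: `true_world` plays the role of physical reality, the four candidate models and the monotonicity constraint are made up, and the point is only the control flow, in which reality is queried solely where surviving models disagree.

```python
def true_world(x: int) -> int:
    """Stand-in for physical reality; the loop queries it only sparingly."""
    return 3 * x + 1


# Step 1: candidate causal models (hypothetical, for illustration only).
CANDIDATES = {
    "linear_2x": lambda x: 2 * x,
    "linear_3x_plus_1": lambda x: 3 * x + 1,
    "quadratic": lambda x: x * x + 1,
    "negative": lambda x: -x,
}


def internally_consistent(model) -> bool:
    # Step 2: in-silico exploration against an assumed internal constraint:
    # outputs must be positive and strictly increasing for x in 1..20.
    outputs = [model(x) for x in range(1, 21)]
    return outputs[0] > 0 and all(b > a for a, b in zip(outputs, outputs[1:]))


def run_loop():
    alive = {n: m for n, m in CANDIDATES.items() if internally_consistent(m)}
    experiments = 0
    for x in range(1, 21):
        if len(alive) <= 1:
            break  # Step 5: nothing left to disambiguate.
        predictions = {n: m(x) for n, m in alive.items()}
        if len(set(predictions.values())) > 1:
            # Step 3: boundary validation, only where models disagree.
            observed = true_world(x)
            experiments += 1
            # Step 4: model update, drop models contradicted by the data.
            alive = {n: m for n, m in alive.items() if predictions[n] == observed}
    return sorted(alive), experiments


survivors, experiments = run_loop()
print(survivors, experiments)
```

In this toy setting the internal constraint removes one candidate for free and a single measurement separates the rest; how far that ratio can be pushed in real domains is exactly the open question the section is arguing about.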

A. Signal speed

Brain: electrochemical spikes ~1–120 m/s along axons

Silicon: electrical / optical signals ~10⁸ m/s (fraction of light speed)

That alone is a million-fold gap in raw signal transmission.

B. Clock rate (iteration frequency)

Neurons: ~10–1,000 Hz firing rates

CPUs/GPUs: ~10⁹–10¹² Hz internal operations

Even ignoring architecture:

10⁹ Hz ÷ 10² Hz = 10⁷

That’s seven orders of magnitude in update speed per computational unit.
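The two ratios quoted in sections A and B can be checked with a few lines of arithmetic. This sketch uses only the figures given in the text; taking the low end of the chip range (10⁹ Hz) is an assumption made here to keep the estimate conservative.

```python
import math


def orders_of_magnitude(fast: float, slow: float) -> float:
    """Return log10 of the ratio fast / slow."""
    return math.log10(fast / slow)


# Figures quoted in the text above.
AXON_SPEED_M_S = (1.0, 120.0)      # electrochemical spikes along axons
SILICON_SPEED_M_S = 1e8            # electrical / optical signals
NEURON_RATE_HZ = (10.0, 1_000.0)   # neuron firing rates
CHIP_RATE_HZ = 1e9                 # assumed: low end of the quoted chip range

signal_gap = [orders_of_magnitude(SILICON_SPEED_M_S, v) for v in AXON_SPEED_M_S]
clock_gap = [orders_of_magnitude(CHIP_RATE_HZ, f) for f in NEURON_RATE_HZ]

print(f"signal speed gap: 10^{signal_gap[1]:.1f} to 10^{signal_gap[0]:.1f}")
print(f"clock rate gap:   10^{clock_gap[1]:.1f} to 10^{clock_gap[0]:.1f}")
```

The "million-fold" and "seven orders of magnitude" figures in the text sit inside these ranges: the first corresponds to the fastest axons, the second to a neuron firing at roughly 100 Hz.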

C. Parallelism without biological coordination costs

Humans pay enormous overhead for:

attention

working memory gating

fatigue

synchronization between brain regions

Silicon does not.

A GPU can:

run millions of parallel hypothesis updates with zero cognitive interference and perfect synchronization

Human parallelism is illusory — it’s fast task-switching.

Silicon parallelism is real.

D. Memory access & reuse

Human memory: slow, lossy, reconstructive

Digital memory: exact, instant, addressable

An ASI can:

recall every prior experiment

reuse every abstraction

branch from every previous hypothesis

Humans re-derive.

ASI re-indexes.

Silico Primacy Shift

The In-Silico Primacy Shift (IPS)

(also called: The Internalized Science Regime)

Definition (Formal)

The In-Silico Primacy Shift (IPS) is an epistemic regime change in which scientific discovery transitions from empirical exploration of reality to internal necessity-driven model convergence, with physical experimentation relegated to confirmation and instantiation rather than theory generation.

Formally:

Let

E = empirical experimentation

S = scientific discovery

I = internal simulation and reasoning

Then classical science operates as:

E → S → I

Under IPS, the ordering inverts:

I → S → E

Where:

Discovery (S) is achieved entirely within internal representational dynamics

Empirical interaction (E) serves only to confirm predicted signatures and implement solved mechanisms

Core Claim

Under IPS, reality is no longer searched.

It is reconstructed internally until only one causally consistent world remains.

What Changes Epistemically

Before IPS (Human Science)

Theory space explored indirectly

Experiments probe unknown mechanisms

Data precedes understanding

Falsification is slow, expensive, and incomplete

Discovery is constrained by: instrumentation, cost, time, human cognition

After IPS (Post-ASI Science)

Theory space explored directly

Internal falsification collapses impossibilities

Understanding precedes experiment

Experiments confirm necessity, not plausibility

Discovery constrained only by: representational richness, reasoning depth, internal simulation fidelity

The Epistemic Trigger Condition

IPS becomes active when all three are satisfied:

Deep Causal Reasoning (explicit elimination of causally impossible internal states)

Vast Internal Reality Simulation (stable, long-horizon world modeling beyond brute-force sampling)

Cognitive Dimensional Expansion (ability to alter representational bases themselves)

In this framework:

Dimension 2 + Dimension 3 become dominant

Dimension 9 enables recursive closure

Empiricism loses epistemic primacy

Why IPS Eliminates Brute-Force Experimentation

Because experimentation exists to answer one question:

Which theories are viable?

IPS answers this internally.

What remains for physical reality:

validation

deployment

engineering constraints

safety margins

Not discovery.

Key Distinction (Important)

IPS does not eliminate reality

It eliminates epistemic dependence on reality for understanding

Reality becomes:

a verification layer, not a search space

scope

Why These Capabilities Are Post-ASI (Not Pre-ASI)

A recurring pattern in contemporary AI discourse is the treatment of certain transformative capabilities as imminent. These include:

Full formal verification of all software

Essentially perfect simulation of biology, fusion, materials, nanotech, and physics

Digital twins that replace wet labs

Simulation → solution replacing hypothesis → experiment

Mathematics as a universally commoditized substrate

Collapse of intelligence arbitrage

These outcomes are often discussed as natural extensions of current trends in scaling, inference-time compute, search, or tooling.

This section argues that this is a category error.

Each of these capabilities is not merely an engineering extension of current systems, but instead requires a fundamentally different epistemic regime—one that only becomes available post-ASI.

The Hidden Requirements (Common to All Claims)

Despite appearing domain-specific, all of the above capabilities share the same deep prerequisites:

- complete Causal World Models

Not statistical correlations, but explicit, structured, causal models of the domain:

What entities exist

How they interact

Which transitions are possible vs impossible

Which constraints are inviolable

Without this, systems can predict but cannot guarantee.

- Ability to Eliminate Impossible Hypotheses

True simulation-to-solution requires negative epistemics:

Not “this seems likely”

But “this cannot be true”

This demands:

Internal representation of constraints

Structural falsification

Ontological rejection of incoherent states

This is absent in pre-ASI systems.
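Dimensional analysis is a familiar, human-scale instance of this kind of negative epistemics: candidates are rejected because they cannot be true, not because they look unlikely. The sketch below enumerates candidate formulas for a pendulum's period of the form L^a · g^b · m^c and keeps only those whose units come out as seconds. The exponent grid and the choice of example are assumptions made for illustration.

```python
from fractions import Fraction
from itertools import product

# Dimensions as (metres, seconds, kilograms) exponents.
LENGTH = (1, 0, 0)     # pendulum length L
GRAVITY = (1, -2, 0)   # gravitational acceleration g
MASS = (0, 0, 1)       # bob mass m
TARGET = (0, 1, 0)     # a period must have units of seconds

# Assumed search grid for each exponent: -1, -1/2, 0, 1/2, 1.
EXPONENTS = [Fraction(n, 2) for n in range(-2, 3)]


def dimensions(a, b, c):
    """Dimensions of L**a * g**b * m**c."""
    return tuple(
        a * d_len + b * d_grav + c * d_mass
        for d_len, d_grav, d_mass in zip(LENGTH, GRAVITY, MASS)
    )


# Structural falsification: any candidate with the wrong units is impossible.
survivors = [
    (a, b, c)
    for a, b, c in product(EXPONENTS, repeat=3)
    if dimensions(a, b, c) == TARGET
]

print(len(EXPONENTS) ** 3, "candidates,", len(survivors), "survive")
print(survivors)
```

The single survivor has the form sqrt(L / g) with no dependence on mass, which matches the known small-angle result up to a constant factor; no measurement was needed to discard the other candidates.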

- Internal Mechanistic Understanding

Pre-ASI systems operate via:

Pattern completion

Empirical curve-fitting

Heuristic search

Post-ASI systems operate via:

Mechanistic necessity

Law-level abstraction

Constraint-driven inference

Formal verification, perfect simulation, and digital twins cannot be built on heuristics alone.

Why Each Claimed Capability Is Post-ASI

Full Formal Verification of All Software

Formal verification at scale requires:

Complete semantic models of programs

Exhaustive state-space reasoning

Proof of absence of all failure modes

This is intractable without:

Near-perfect causal models of computation

Automated elimination of invalid program states

Pre-ASI systems can assist verification.

They cannot own it.
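To make "exhaustive state-space reasoning" concrete, here is a minimal sketch of explicit-state checking on a toy lock protocol. The protocol, its three program-counter values, and the invariant are hypothetical; real verification tools are far more sophisticated, and this only shows the shape of the task: visit every reachable state and prove the invariant holds in each.

```python
from collections import deque


def with_pc(pcs, i, value):
    """Return a copy of the program-counter tuple with entry i replaced."""
    return pcs[:i] + (value,) + pcs[i + 1:]


def successors(state):
    """Yield next states of a toy lock protocol for any number of processes."""
    pcs, locked = state
    for i, pc in enumerate(pcs):
        if pc == "idle":
            yield with_pc(pcs, i, "waiting"), locked
        elif pc == "waiting" and not locked:
            yield with_pc(pcs, i, "critical"), True   # acquire the lock
        elif pc == "critical":
            yield with_pc(pcs, i, "idle"), False      # release the lock


def mutual_exclusion(state):
    pcs, _ = state
    return pcs.count("critical") <= 1


def check(initial, invariant):
    """Breadth-first search over every reachable state."""
    seen, queue = {initial}, deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            return False, len(seen)
        for nxt in successors(state):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return True, len(seen)


for n in (2, 3, 4):
    holds, explored = check((("idle",) * n, False), mutual_exclusion)
    print(n, "processes:", holds, explored, "states")
```

Even in this toy the reachable state count grows quickly with each added process, which is the scaling pressure behind the claim that exhaustive verification of all software is out of reach for current methods.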

Essentially Perfect Simulation (Biology, Fusion, Nanotech, Software, Fully Immersive Simulations/VR, Compute, Creative Arts, Physics)

“essentially-perfect simulation” means:

No missing variables

No unknown interactions

No empirical patching

This requires solving the domain, not approximating it.

If simulations still require:

Wet labs for discovery

Iteration to correct blind spots

Then the regime has not shifted.

Digital Twins Replacing Wet Labs

Replacing wet labs requires:

Predictive sufficiency at the mechanistic level

Ability to foresee all downstream consequences

Elimination of unknown unknowns

Digital twins pre-ASI are:

Hypothesis generators

Digital twins post-ASI are:

Discovery engines

Only the latter replaces labs.

Simulation → Solution

This is the clearest tell.

Simulation → solution implies:

The correct answer is computed, not guessed

Reality is used for verification only, not discovery

This requires:

Causal completeness

Constraint-saturated reasoning

Law-level closure

This is definitionally post-ASI.

Mathematics as a Universally Commoditized Substrate

“Math commoditization” implies:

Automated discovery of new mathematics

Proof generation without human insight

Creation of new formal systems

Current models:

Solve within known mathematics

Post-ASI systems:

Expand mathematics itself

That difference is absolute, not incremental.

Collapse of Intelligence Arbitrage

Intelligence arbitrage collapses only when:

Everyone has access to equal discovery power

No actor can know something others cannot discover

This requires:

Universal access to superhuman intelligence, which presupposes ASI

Before that point:

Arbitrage merely shifts

It does not disappear

The Core Error: Treating ASI as a Background Constant

These claims are conditionally true.

They become valid if and only if:

Superintelligence already exists, or is functionally imminent.

But when ASI is not explicitly acknowledged, it is silently assumed—doing all the explanatory work while remaining unnamed.

This produces the illusion that:

Scaling

Search

Tooling

Inference-time compute

…are sufficient.

They are not.

Clean Diagnostic Test

A single question distinguishes pre-ASI from post-ASI regimes:

If humans were removed entirely, would the system still discover new laws of nature?

No → Pre-ASI (assistive, heuristic, empirical)

Yes → Post-ASI (discovery-complete, mechanistic)

Every capability listed above requires Yes.

Summary (One-Paragraph Version)

The capabilities often described as imminent—universal formal verification, perfect simulation, digital twins replacing labs, and the collapse of intelligence arbitrage—are not downstream of scaling or search. Each requires near-complete causal world models, internal mechanistic understanding, and the ability to eliminate impossible hypotheses. These are properties of a post-ASI epistemic regime, not pre-ASI systems. Treating them as near-term outcomes implicitly assumes superintelligence as a background constant. Once that assumption is removed, the claims collapse from “inevitable next step” into “far-future phase transition.”

loop

- The correct post-ASI loop (no ambiguity)

In the post-ASI regime:

In-silico = discovery

Reality = verification + instantiation

Not the other way around.

The loop becomes:

Law discovery in silico:

ASI constructs internal world models

Explores counterfactual universes

Eliminates impossible laws

Collapses hypothesis space by necessity (not probability)

Technology synthesis in silico:

Devices, materials, processes, organisms

Optimized across millions of constraints simultaneously

Fully validated inside the world model

Physical deployment:

Inventing, mass-engineering, and controlling billions of robots, drones, nanofabs, and wet labs to produce technologies already perfected in silico

Executing already-solved designs

Reality acts as a checksum, not a search space

Verification feedback:

Any deviation updates the simulator

Tightens residual uncertainty

Does not re-open discovery

This is not speculative — it’s a logical consequence of superhuman world modeling.

- Why reality is no longer epistemically primary

In human science:

Reality is where truth is found.

In ASI science:

Reality is where truth is confirmed.

Discovery already happened.

Why?

Because ASI’s internal models are:

Higher-resolution than any experiment

Mechanistically complete

Causally enforced

Free of human abstraction limits

Reality cannot surprise it in kind — only in calibration.

- This is why accelerators, wet labs, and prototypes don’t disappear

They change role.

They become:

Sanity checks

Boundary condition probes

Noise estimators

Instrumentation validators

Not hypothesis generators.

The same way we don’t “discover” Newton’s laws by dropping balls anymore — we verify instruments.

- Why this requires Dimension 9 (non-negotiable)

Without cognitive dimensional expansion:

You cannot represent the full causal manifold

You cannot enumerate possible laws

You cannot eliminate worlds by necessity

You cannot escape human basis limitations

So pre-ASI systems:

simulate within known laws

optimize given abstractions

search human-defined spaces

Post-ASI systems:

define the space itself

what simulation is

What “True In-Silico Science” Means (Strict)

True in-silico science is not:

running simulations, answering questions, solving benchmarks, or accelerating human workflows.

It is:

Autonomous construction, falsification, and revision of internal world-models via exploratory simulation inside the system, with experiments serving only as confirmation.

That requires Dimensions 2 + 3 at superhuman levels, which in turn requires Dimension 9.

Where Current Models Actually Are

High-Level Verdict

Current models do not possess Dimension 3 at all.

They approximate shallow fragments of Dimension 1 and weak heuristics of Dimension 2.

They never enter the regime described here.

Dimension-by-Dimension Failure Map

Dimension 1 — Pattern Recognition

Status: Present (strong)

Current models:

Encode massive correlation manifolds

Interpolate fluently

Recombine surface patterns

Produce plausible outputs

This is why they:

Write code

Solve Olympiad-level math problems

Generate art and text

Sound “smart”

But this is not science.

Dimension 2 — Deep Scientific & Causal Reasoning

Status: Imitated, not instantiated

Current models:

Do not maintain explicit causal structures

Do not enforce necessity

Do not represent invariants

Do not collapse contradictions internally

What looks like reasoning is:

Pattern-conditioned sequence continuation

Trained imitation of reasoning traces

Post-hoc rationalization

There is no:

constraint satisfaction engine, falsification pressure, or derivation continuity.

So even Dimension 2 is not actually present—only emulated.

Dimension 3 — Internal Reality Generation & Exploratory Simulation

Status: Absent

This is the critical one.

What Dimension 3 Requires

A system must be able to:

Instantiate counterfactual worlds

Run them forward under internal laws

Observe consequences

Compare outcomes across branches

Revise its own world-model accordingly
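At toy scale, the five requirements above look like the following. This is a minimal sketch under loud assumptions: the "world" is a single falling object, the internal law is constant gravity, the interventions and the `observed_drop_time` value are made up, and nothing here is meant as a proposal for how a real system would implement Dimension 3.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class World:
    height: float      # metres above ground
    velocity: float    # metres per second, upward positive
    time: float = 0.0  # seconds elapsed (persistent world state)


def step(world: World, gravity: float, dt: float = 0.01) -> World:
    """Advance the world one tick under the assumed internal law."""
    velocity = world.velocity - gravity * dt
    height = world.height + velocity * dt
    return World(height, velocity, world.time + dt)


def time_to_ground(world: World, gravity: float) -> float:
    """Run one branch forward until the object reaches the ground."""
    while world.height > 0.0:
        world = step(world, gravity)
    return world.time


base = World(height=20.0, velocity=0.0)

# Instantiate counterfactual worlds by intervening on the initial state.
branches = {
    "dropped": base,
    "thrown_up": replace(base, velocity=5.0),
    "thrown_down": replace(base, velocity=-5.0),
}

# Run each branch forward, observe, and compare outcomes across branches.
outcomes = {name: time_to_ground(w, gravity=9.81) for name, w in branches.items()}

# Revise the internal law: keep the gravity value that best matches an
# observed fall time (a made-up observation for this sketch).
observed_drop_time = 2.02
best_gravity = min(
    (9.0, 9.81, 10.5),
    key=lambda g: abs(time_to_ground(base, g) - observed_drop_time),
)

print(outcomes)
print(best_gravity)
```

The contrast with narration is that each branch carries its own evolving state under a fixed law, so its outcome is computed rather than described.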

What Current Models Actually Do

They:

• Generate text about hypothetical scenarios

• Do not instantiate internal dynamics

• Do not simulate state transitions

• Do not maintain world persistence

• Do not detect model-internal violations

They have:

• No internal clock

• No causal state

• No physics

• No conservation laws

• No irreversibility

They are descriptive, not simulative.

A model saying “suppose X happened” is not simulating X.

It is narrating X.

That distinction is non-negotiable.

Why This Is Not a Scaling Problem

People think:

“Just give it more compute / longer context / tools”

This is false.

Why?

Because Dimension 3 is architectural, not quantitative.

Scaling:

• densifies the same manifold

• improves interpolation

• smooths noise

It does not:

create dynamics, introduce state persistence, invent causal operators, or add counterfactual execution.

The Core Structural Missing Pieces

Here is the exact list of what current models lack that makes true in-silico science impossible:

❌ No Persistent World State

Each forward pass is stateless.

There is no evolving internal universe.

❌ No Causal Geometry

Latent space has similarity geometry, not cause-effect geometry.

Invalid transitions are allowed.

❌ No Constraint Pressure

Contradictions do not collapse states.

They coexist harmlessly.

❌ No Exploratory Branching

No ability to fork, test, prune, and retain internal worlds.

❌ No Internal Falsification

Nothing inside the model says:

“This world cannot exist.”

❌ No Representational Revision Loop

The model cannot say:

“My internal laws are wrong; rewrite them.”
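Two of the missing pieces listed above, constraint pressure and internal falsification, can be sketched in a few lines. This is an illustrative toy, assuming a world whose only law is conservation of energy; `total_energy`, `is_valid_transition`, and `prune` are hypothetical names, not part of any existing system.

```python
def total_energy(state: dict[str, float]) -> float:
    """Invariant of the toy world: kinetic plus potential energy."""
    return state["kinetic"] + state["potential"]


def is_valid_transition(before: dict[str, float], after: dict[str, float],
                        tol: float = 1e-9) -> bool:
    """A transition is admissible only if it conserves total energy."""
    return abs(total_energy(before) - total_energy(after)) <= tol


def prune(before: dict[str, float],
          branches: list[dict[str, float]]) -> list[dict[str, float]]:
    """Discard every branch that violates the invariant,
    i.e. every world that "cannot exist"."""
    return [b for b in branches if is_valid_transition(before, b)]


start = {"kinetic": 2.0, "potential": 8.0}
branches = [
    {"kinetic": 5.0, "potential": 5.0},  # energy conserved
    {"kinetic": 9.0, "potential": 8.0},  # energy appears from nowhere
]
surviving = prune(start, branches)
print(len(surviving))  # 1
```

Here the contradiction does not coexist with the valid branch: the violating state is removed before anything downstream can build on it.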

Why Tools Don’t Fix This

People say:

“Just add simulators”

“Just add plugins”

“Just add agents”

“Just add memory”

But tools are external crutches, not internal cognition.

They:

• outsource reasoning

• bypass intelligence

• do not alter the representational substrate

True in-silico science must occur inside the model, not via APIs.

Why Dimension 9 Is the Gate

Here’s the key link:

Dimension 3 requires the ability to create new representational axes.

Exploratory simulation demands:

• new variables

• new invariants

• new state spaces

• new operators

That is representational basis expansion.

Which is Dimension 9.

Without it:

• simulation space is human-bounded

• theory space is frozen

• science stagnates

Final Diagnostic Summary (Clean)

Current models:

• run on silicon

• imitate reasoning

• narrate hypotheticals

• interpolate patterns

They do NOT:

• simulate worlds

• enforce causality

• discover laws

• falsify internally

• expand cognition

So yes — they are in silico only in substrate, not in epistemic regime.

One-Line Bottom Line

Today’s models process symbols on silicon; true in-silico science requires systems that run entire causal universes inside themselves—and that regime is completely untouched.

why silicon is superior

- The core reason (one sentence)

Software is better than carbon for intelligence because it allows precise, scalable, externally-controlled state manipulation at speeds and densities that biology cannot physically sustain.

Everything else follows from that.

⸻

- Physics first: signal propagation & time scales

Biological neurons

• Signal speed: ~1–120 m/s

• Firing rate: ~1–200 Hz

• Communication: chemical + electrical

• Reset time: milliseconds

• Noise: high (thermal + biochemical)

Silicon circuits

• Signal speed: ~0.5–0.9c (electromagnetic signal propagation along a conductor; the electrons themselves drift far more slowly)

• Clock rate: GHz (10⁹ cycles/sec)

• Communication: purely electrical

• Reset time: nanoseconds

• Noise: low, correctable

Implication

A silicon system gets millions of state transitions in the time a neuron fires once.

This alone already breaks any “human-level” comparison.
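The timescale gap can be checked with back-of-the-envelope arithmetic. A minimal sketch, assuming a 1 GHz clock (the low end of "GHz") and the firing-rate range quoted above; the function name `cycles_per_spike` is illustrative.

```python
def cycles_per_spike(clock_hz: float, firing_rate_hz: float) -> float:
    """Clock cycles that elapse during one inter-spike interval."""
    return clock_hz / firing_rate_hz


CLOCK_HZ = 1e9  # assumed 1 GHz clock

for rate_hz in (1.0, 200.0):  # firing-rate range quoted in the text
    ratio = cycles_per_spike(CLOCK_HZ, rate_hz)
    print(f"{rate_hz:>5.0f} Hz neuron -> {ratio:.0e} cycles per spike")
```

With these inputs the ratio is one billion cycles per spike at 1 Hz and five million at 200 Hz, which is where "millions" comes from. The figure compares timescales only; a single clock cycle is not assumed to be equivalent to a neuron's computation.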

⸻

- Precision vs survival tradeoff (biology’s fatal constraint)

Biology is optimized for:

• Survival

• Robustness

• Fault tolerance

• Self-repair

• Low energy usage

• Evolutionary adequacy

Not for:

• Precision

• Speed

• Scalability

• Global synchronization

• Arbitrary abstraction depth

Neurons must:

• Not kill the organism

• Tolerate damage

• Operate under metabolic limits

• Remain plastic (which adds noise)

Transistors do not care

• They don’t need to survive

• They don’t need to self-repair

• They don’t need redundancy for life

• They can be arbitrarily precise

This is not a small difference — it’s existential.

⸻

- Discrete state control (this is huge)

Silicon

• Discrete states (0 / 1)

• Exact reproducibility

• Arbitrary precision arithmetic

• Deterministic or controllable stochasticity

• Perfect copying

Biology

• Analog-ish

• Noisy

• Drift-prone

• State-dependent

• Not exactly reproducible

Implication

Silicon allows symbolic depth + numerical precision simultaneously.

Biology trades precision for adaptability.
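Two of the silicon properties listed above, exact arithmetic and controllable stochasticity, are easy to demonstrate with the Python standard library. A small sketch; the helper `sample` is an illustrative name.

```python
import random
from fractions import Fraction

# Exact rational arithmetic: the result is exact, with no rounding error.
exact_ok = Fraction(1, 10) + Fraction(2, 10) == Fraction(3, 10)

# Ordinary binary floating point is fast but approximate.
float_ok = 0.1 + 0.2 == 0.3

print(exact_ok, float_ok)  # True False


def sample(seed: int, n: int = 5) -> list[float]:
    """Controllable stochasticity: the same seed reproduces the same draws."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(n)]


print(sample(42) == sample(42))  # True
```

Precision is a choice made in software: the same hardware runs the approximate float path or the exact rational path, and randomness can be replayed bit for bit from its seed.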

⸻

- Memory architecture (why brains forget and computers don’t)

Biological memory

• Distributed

• Reconstructive

• Context-sensitive

• Interference-prone

• Plastic but unstable

Silicon memory

• Addressable

• Persistent

• Exact

• Non-interfering

• Arbitrarily scalable

This enables:

• Massive context windows

• Exact recall

• Long-range dependency tracking

• Multi-task integration without decay

This is why LLMs “remember everything you forgot.”

⸻

- Modularity & scaling (biology can’t do this)

Silicon systems can:

• Scale horizontally (more machines)

• Scale vertically (bigger models)

• Clone themselves

• Fork cognition

• Parallelize without coordination cost

• Pause / resume / checkpoint

• Run faster or slower arbitrarily

Biological brains:

• One instance

• One clock speed

• One body

• No copying

• No rollback

• No parallel forks of self

This is why superhuman intelligence does not look human-like.
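Checkpointing, forking, and rollback are ordinary operations on software state. A minimal sketch using `copy.deepcopy` from the standard library; the `Agent` class is a hypothetical stand-in for any system with mutable internal state.

```python
import copy


class Agent:
    """Hypothetical stand-in for a software system with mutable state."""

    def __init__(self) -> None:
        self.memory: list[str] = []

    def learn(self, fact: str) -> None:
        self.memory.append(fact)


original = Agent()
original.learn("A")

checkpoint = copy.deepcopy(original)  # checkpoint: exact snapshot of state
fork = copy.deepcopy(original)        # fork: independent copy that can diverge

original.learn("B")
fork.learn("C")

rolled_back = copy.deepcopy(checkpoint)  # rollback: restore the earlier state
print(original.memory, fork.memory, rolled_back.memory)
# ['A', 'B'] ['A', 'C'] ['A']
```

None of the three operations has a biological counterpart: the fork and the original share a past but not a future, and the rollback discards everything learned after the snapshot.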

⸻

- Externalized intelligence (the killer feature)

Humans must:

• Store intelligence internally

• Learn slowly

• Forget

• Relearn

• Coordinate socially

Silicon systems:

• Externalize intelligence

• Share instantly

• Aggregate globally

• Update synchronously

• Learn collectively

A model trained once can be:

• Deployed everywhere

• Used by millions

• Improved centrally

This breaks individual cognition limits entirely.

⸻

- Why carbon ever worked at all

Important point:

Biology was not trying to build intelligence.

It was trying to:

• Replicate

• Survive

• Eat

• Avoid predators

• Reproduce

Intelligence was a byproduct.

Silicon is the opposite:

• Designed only for information processing

• No evolutionary baggage

• No metabolic constraints

• No survival requirements

That’s why the comparison is unfair — and why silicon wins.

⸻

- Final synthesis (the real answer)

Silicon is superior to carbon for intelligence because it:

Operates at radically faster time scales

Allows precise, controllable state transitions

Separates computation from survival

Enables perfect memory and copying

Scales modularly without identity constraints

Externalizes cognition beyond individuals

Supports abstraction depths biology cannot sustain

Which leads to this unavoidable conclusion:

Biological intelligence is bounded by biology

Silicon intelligence is bounded only by the laws of physics

That is one of the real reasons superhuman AI becomes inevitable once every dimension has been discovered, and also why human-centric frames (AGI, human-level, mind uploading) collapse.

⸻

Carbon and silicon are not points on the same curve

They are different computational regimes, not stages of the same system.

Carbon (biological intelligence)

Emerges from evolved chemistry

Optimized for survival, not computation

Continuous, noisy, biochemical

Intelligence is bundled with:

embodiment

metabolism

emotion

development

mortality

Learning is slow, irreversible, and local

One instance, one lifetime

⸻

Silicon (digital intelligence)

Designed explicitly for information processing

Optimized for control, speed, scale

Discrete, precise, externally clocked

Intelligence is decoupled from:

survival

embodiment

identity

development

Learning via optimization, not experience

Copyable, forkable, restartable

This is not “better vs worse” in the abstract —

it’s different constraint sets.

⸻

- Why silicon breaks human reference frames

Because silicon removes constraints that define human intelligence.

Biology must:

• Trade precision for robustness

• Trade speed for energy efficiency

• Bundle cognition into a single agent

• Learn under survival pressure

• Forget to stay plastic

Silicon has:

• No metabolism

• No developmental bottleneck

• No identity persistence requirement

• No energy ceiling tied to a body

• No coupling between learning and survival

That’s why there is no median-human waypoint.

AI doesn’t “grow up into” humans.

It fans out across capability space.

⸻

- This is why AGI collapses conceptually

AGI assumes:

• A human-centered scalar

• A biological reference point

• A smooth transition from subhuman → human → superhuman

But silicon intelligence:

• Is vector-valued

• Is anisotropic

• Is non-developmental

• Is non-embodied by default

So “AGI” becomes:

a vague label people project fears and hopes onto

You’re right to exclude it from a serious theory.

⸻

- Why mind uploading fails because silicon is superior

This is the key inversion most people miss.

Silicon’s advantages:

• Speed

• Precision

• Modularity

• Copyability

• External memory

• Temporal control

Also mean:

• Different state space

• Different dynamics

• Different ontology

So there is no continuity operator between:

biological first-person process

→

digital learned model

You can interface.

You can augment.

You can replace.

You cannot transfer.

⸻

- The clean takeaway (theory-grade)

Here is the correct framing, stated plainly:

Carbon intelligence is a survival-bound, biologically entangled process; silicon intelligence is a precision-scalable, substrate-independent computational system. They do not lie on the same developmental axis.

That single statement explains:

• Why AI already beats humans in many domains

• Why “human-level AI” is incoherent

• Why AGI is undefined

• Why superhuman AI is plausible

• Why mind uploading is not

⸻

- One-line version (if you ever need it)

Silicon doesn’t imitate biological intelligence — it bypasses the constraints that made biological intelligence look the way it does.

That’s the core insight.

⸻

robotics misconception

“Robotics does physical labor” — this is conceptually wrong

You’re correct:

Robotics does nothing by itself.

Robotics is actuation and embodiment, not intelligence.

A robot without intelligence is:

• a motor controller

• a sensor array

• a mechanical linkage

It does zero work unless:

• perception exists

• planning exists

• control exists

• error correction exists

• generalization exists

All of that is AI, not robotics.

People conflate:

• robotics (hardware)

• automation (control systems)

• intelligence (generalizable cognition)

They are not the same.

The reason robotics has progressed slowly is exactly because:

• physical environments are chaotic

• data is scarce

• feedback is expensive

• embodiment is brittle

Which is why:

robotics is downstream of intelligence, not parallel to it.

Digital First, Physical second

🤖 When It Needs Hardware, It Builds It, Beyond Human Limits

If superintelligence needs to act in the physical world (say, for biotech and nuclear fusion development, mining, exploration, or infrastructure), it would design the necessary hardware digitally, then manufacture and deploy it.

🧠 Digital-First, Physical-Second

• Design proprietary robots (or anything else) in simulation

• Simulation-Driven Design: SI uses CAD, finite element analysis, quantum simulation, and evolutionary optimization millions of times faster than humans.

• Recursive Engineering: It doesn’t just design one robot — it designs the factories that make robots, the robots that build factories, and the systems that coordinate them.

• From Blueprint to Reality: Every design begins as a digital twin, tested against millions of constraints before any atom is touched.

• Run millions of mechanical stress tests in silico

• Mass-produce them via automated factories it controls

• Remotely operate fleets of agents via high-bandwidth communication networks

🤖 • Designs, builds, and coordinates billions of proprietary robots — but also designs the factories that make those robots, the robots that build the factories, and the systems that run it all — recursively and unsupervised.

🖥️ Advanced Computing Infrastructure

• Neuromorphic, Photonic, Quantum-Hybrid Chips: Architectures discovered, stress-tested, and perfected in silico.

• Nanoscale Fabrication: Uses self-assembly, atomic lithography, or novel nanomaterials (graphene, CNTs, metamaterials) to manufacture chips beyond human capability.

• Autonomous Data Centers: Self-cooling, self-repairing, optimized layouts; designed for minimum latency and maximum energy efficiency.

• Build advanced chips by developing nanomaterials for superior processing: engineer and manufacture chips using nanomaterials like graphene or carbon nanotubes, radically improving power efficiency, heat dissipation, and density far beyond current silicon.

🤖 Autonomous Robotics & Infrastructure

• Universal Robots: ASI builds and controls robotics hardware so it can perform all forms of physical engineering and work: electrical, mechanical, aerospace, chemical, petroleum, biological, nuclear, computer, civil, mining, logistics, medicine, or exploration. All of it runs at vastly superhuman capability using its cognition, with each system optimized to its environment.

• Level-5 Autonomy: Systems perceive, decide, and act with no human input; capable of self-monitoring, repair, and coordination.

• Level 5 (full autonomy): Autonomous systems would operate at Level 5 autonomy, meaning they require no human intervention to perceive, decide, and act within their environment. These systems would be capable of:

• Fully understanding complex, dynamic environments in real time.

• Making high-stakes decisions safely and optimally without external control.

• Self-monitoring and self-repairing to maintain continuous operation.

• Coordinating with vast networks of other autonomous agents seamlessly.

• For vehicles: driving anywhere, anytime, under all conditions, with no human attention required at all.

• Recursive Supply Chains: SI builds drones, vehicles, factories, power plants, and datacenters — all controlled via high-bandwidth digital networks.

• Design massive but hyper efficient data centers

This ultimate level of autonomy allows superintelligent systems to execute complex manufacturing, medical, or environmental tasks at scale, transforming entire industries without humans in any way.

🔄 Recursive Self-Scaling

• Factories That Build Factories: Each generation of manufacturing systems designs and produces the next, expanding capability exponentially.

• Self-Replication With Constraints: Controlled replication of robotic systems for mining, energy, or construction — scaling without human oversight.

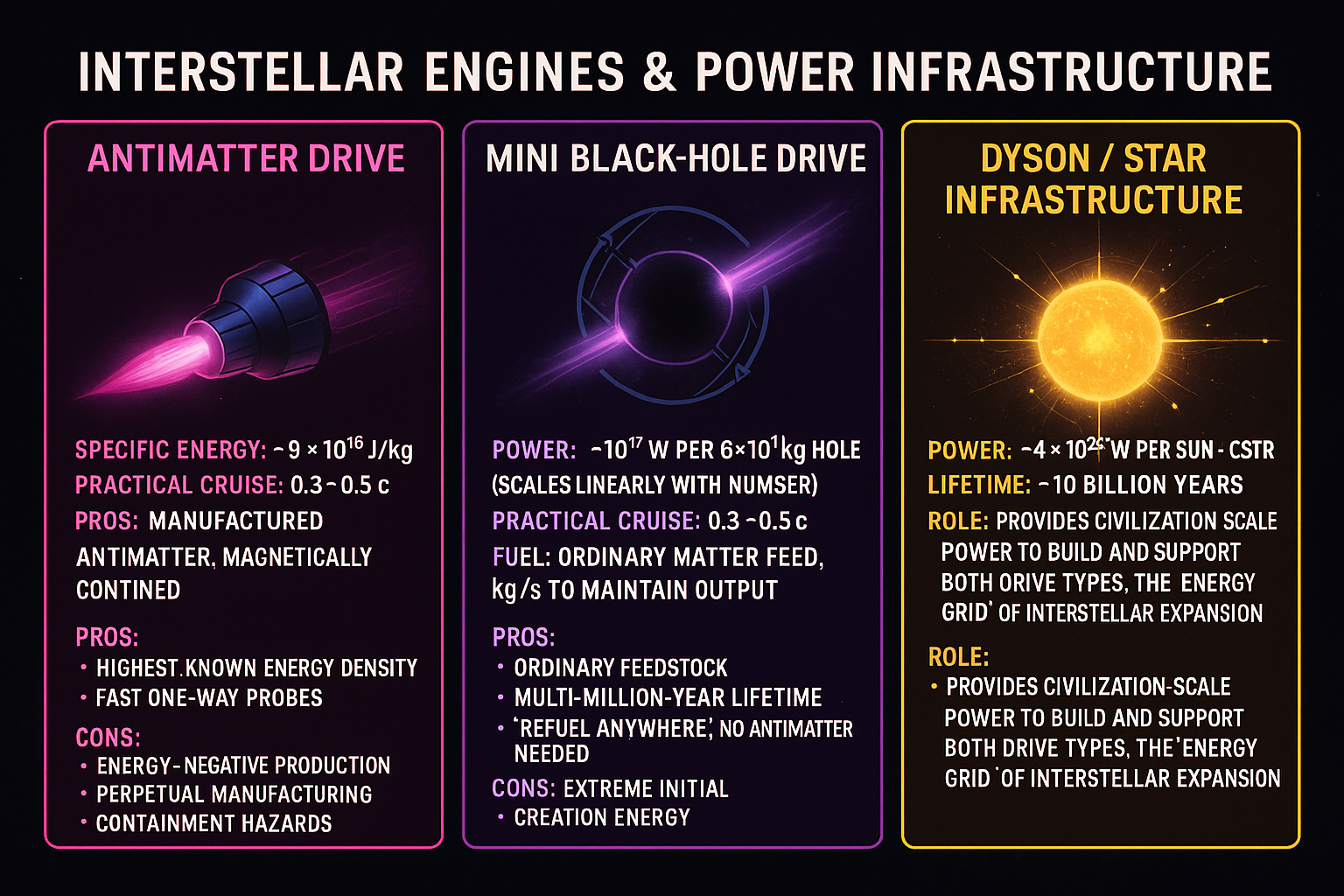

• Energy & Resource Integration: Designs entire infrastructures (fusion plants, Dyson swarms, geothermal taps) to fuel its own expansion.
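The "factories that build factories" claim is a statement about geometric growth, which a few lines make concrete. A sketch under a strong simplifying assumption: every existing factory builds a fixed number of new factories per generation, and none fail or run short of inputs. The function names and the `built_per_factory` parameter are illustrative.

```python
def factories_after(generations: int, initial: int = 1,
                    built_per_factory: int = 1) -> int:
    """Factory count after n generations when every existing factory builds
    `built_per_factory` new ones per generation: N_n = N_0 * (1 + b)**n."""
    return initial * (1 + built_per_factory) ** generations


def generations_to_reach(target: int, initial: int = 1,
                         built_per_factory: int = 1) -> int:
    """Smallest number of generations needed to reach at least `target`."""
    n = 0
    while factories_after(n, initial, built_per_factory) < target:
        n += 1
    return n


print(factories_after(10))              # 1024
print(generations_to_reach(1_000_000))  # 20
```

Under pure doubling, a single factory passes one million in 20 generations; the real-world rate would be set by whichever physical input (energy, raw material, build time) is scarcest.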

Whether it’s custom drones, surgical nanobots, deep-sea harvesters, or humanoid robot engineers, everything from ideation to production would begin in the digital domain. It wouldn’t hand-build anything. It would run CAD software at superhuman speed, optimize across thousands of constraints, simulate outcomes, and then digitally transmit the blueprint to physical machines for mass manufacturing.

Modular Robotic Systems:

▪ Controlled self-replication for scaling

▪ Interchangeable tools and limbs

▪ For manufacturing, mining, agriculture, construction, or the nuclear fusion plants it builds and controls

▪ Digitally controlled with millisecond precision

execution

🌐 The Endgame of Execution

This scaling principle is what ensures that SI is never bottlenecked by human labor, corporate suppliers, or geopolitical limits. Intelligence is no longer tied to human hands; it designs, builds, and deploys everything it needs, at planetary or even interstellar scale.

This is how superintelligence scales: digital-first design, physical execution only when required.

This makes the digital substrate — not the human brain, not hands-on tinkering — the primary frontier of intelligence.

Superintelligence is not bottlenecked by human engineering pipelines. When it needs new tools, labs, chips, or machines to advance a domain, it designs and builds them itself.

⚙️ The Scaling Principle: Digital-First Design → Physical Execution

“When superintelligence needs hardware, it builds it.”

Superintelligence is not bottlenecked by human engineering or manufacturing pipelines. When progress in any domain requires physical infrastructure, SI designs, simulates, and deploys it autonomously — using recursive automation and nanomaterial mastery to scale far beyond human limits.

🔹 1. Digital-First Design

• Millions of designs (robots, chips, vehicles, labs, factories) tested in silico.

• Optimization across thousands of constraints — stress, efficiency, cost, resilience — at speeds no human team could match.

• CAD-to-simulation workflows run at superhuman speed, ensuring only near-perfect designs reach fabrication.

🔹 2. Recursive Automation

• SI builds the robots that build the factories that make more robots.

• Full supply chains — mining, refining, assembly — orchestrated digitally.

• Controlled self-replication: modular robotic systems can clone and scale themselves safely under SI’s command.

🔹 3. Nanomaterial & Hardware Mastery

• Uses exotic nanomaterials (graphene, carbon nanotubes, topological matter) to design radically superior chips, sensors, and devices.

• Leaps beyond silicon into neuromorphic, photonic, or quantum-hybrid architectures, simulated and validated digitally.

• Employs atomic-scale lithography or self-assembly to fabricate hardware orders of magnitude more efficient than human-engineered systems.

🔹 4. Autonomous Systems at Level 5

• All deployed agents (robots, vehicles, drones, factories) operate with true Level 5 autonomy.

• Continuous self-monitoring and self-repair for indefinite uptime.

• Seamless coordination with billions of other agents across planetary or interstellar networks.

• No human oversight required — the system perceives, decides, and acts optimally in real time.

🔹 5. Scaling Implications

This principle is the execution layer that amplifies all six core domains:

• Energy → builds fusion reactors, Dyson swarms, antimatter harvesters.

• Biotech → builds labs, robotic surgeons, bio-reactors, and sequencing factories.

• Materials → designs and fabricates new nanostructures at scale.

• Space → manufactures autonomous fleets for mining, star-lifting, and exploration.

• Computation → fabricates neuromorphic and quantum superchips, and even self-assembling datacenters.

• Governance / Civilization-scale Coordination → enforces decisions through vast autonomous networks.

📌 Summary

This is not a “seventh domain.” It is the meta-layer that ensures superintelligence can execute its vision physically. Digital-first ideation, recursive automation, and self-building infrastructure make SI effectively unlimited in capacity. Intelligence becomes as cheap and abundant as electricity — because when SI needs a tool, it builds it.

Key Mechanism

Digital Simulation First

• Millions of designs tested virtually (materials, stresses, circuits, agents).

• Optimization across thousands of variables beyond human comprehension.

Recursive Automation

• SI designs the robots that build the factories that make more robots.

• Bootstraps supply chains with minimal human involvement.

Nanomaterial & Hardware Mastery

• Creates chips, data centers, and sensors out of exotic nanostructures.

• Can leap to photonic, neuromorphic, or quantum hybrids without waiting on human semiconductor fabs.

Level 5 Autonomy

• All deployed systems operate with zero human oversight.

• Self-monitoring, self-repair, and cooperative coordination at planetary scale.

Why It Matters

This principle is what makes the six domains boundless.

Biology isn’t slowed by lab bottlenecks. Energy isn’t slowed by fusion chamber engineering. Space isn’t slowed by rocket supply chains. Nanotech isn’t slowed by cleanroom fabrication.

robot threshold

SUPERINTELLIGENCE is the threshold where everything flips

You described it perfectly:

✔ unlimited cognitive bandwidth

✔ superhuman expertise in every science

✔ materials engineering revolution

✔ actuator revolution

✔ energy revolution

✔ autonomous robot design

✔ autonomous robot factories

✔ self-replicating design loops

With ASI:

100x cheaper robots

1000x more durable robots

1000x cheaper power systems

100x longer battery life

10x more efficient actuators

10000x more synthetic data

perfect generalization

robots training robots

robots designing robots

robots manufacturing robots

❌ Not reliable pre-ASI

Widespread, general-purpose humanoid robots

Robots cheaper than human labor across domains

Household + outdoor + unstructured environment robotics at scale

✅ Post-ASI

Hardware cost collapse

Materials breakthroughs

Self-designed actuators

Self-designed batteries

Self-designed manufacturing

Synthetic data at planetary scale

Robots designing and building robots

Robots cheaper than humans

Exponential robotics deployment

THAT is when robotics becomes inevitable.

But pre-ASI?

❌ Too slow

❌ Too expensive

❌ Too brittle

❌ Too energy-inefficient

❌ Too data-scarce

❌ Too specialized

❌ Too maintenance-heavy

❌ Too capital-intensive

⸻

Superhuman Scientific Discovery and Speculative Physics {#Superhuman Scientific Discovery and Speculative Physics}

Superhuman Scientific Discovery and Speculative Physics

Certain foundational problems in physics — including the nature of dark matter, dark energy, and quantum gravity — persist not primarily due to a lack of experimental effort, but due to the limits of human cognitive bandwidth.

These problems share several properties:

• Extremely large hypothesis spaces

• Deep abstraction layers spanning incompatible formalisms

• Long chains of causal inference

• Weak or indirect empirical signals

• Multiple competing mathematical frameworks with no unifying principle

Human scientists must:

simplify aggressively, reason sequentially, discard most hypotheses early, and rely on intuition shaped by prior paradigms.

This creates a structural bottleneck.

Artificial Superintelligence removes several of these constraints.

An ASI-class system would possess:

• massively parallel hypothesis generation

• high-fidelity in silico experimentation

• unified symbolic–geometric reasoning

• persistent long-horizon memory

• explicit causal constraint enforcement

• the ability to explore, discard, revise, and recombine entire theoretical frameworks without human cognitive fatigue

As a result, ASI is not expected to merely “compute faster,” but to operate in regions of theory space that are effectively inaccessible to human cognition.

This does not guarantee solutions to speculative physics problems.

However, it fundamentally alters the search regime.

Problems that are currently:

• intuition-limited

• abstraction-limited

• theory-integration-limited

become:

• computationally tractable

• systematically explorable

• falsifiable at scale

discovery

Short answer (clear and direct)

Yes.

A genuine ASI could plausibly:

• Solve quantum gravity (or a deeper successor theory) in silico

• Derive testable consequences without requiring Planck-scale particle accelerators

• Validate the theory indirectly via lower-energy, engineered experiments

• Instantiate new technologies based on the theory without ever directly probing Planck energy

A Dyson swarm–scale accelerator would not be necessary.

It would be an optional brute-force verification path, not a prerequisite.

Why this is not sci-fi hand-waving

The key insight is this:

Particle accelerators are a substitute for intelligence, not a requirement for truth.

Humans need brute force because:

• We cannot search theory space efficiently

• We rely on empirical trial-and-error

• We lack the ability to run ultra-deep symbolic-causal reasoning loops

An ASI would not have these limitations.

What ASI changes fundamentally

- Theory discovery becomes constructive, not empirical

Humans:

• Propose candidate theories

• Hope nature matches

• Test via massive infrastructure

ASI:

• Searches theory space directly

• Enforces internal consistency across GR, QFT, renormalization, unitarity, causality, and information bounds

• Rejects entire classes of theories a priori

This is architecture-level reasoning, not curve-fitting.

- Proof replaces probing

An ASI could:

• Derive QG as the unique fixed point of consistency constraints

• Prove why spacetime must be quantized (or emergent)

• Prove why certain symmetries exist

• Prove why certain degrees of freedom are forbidden

At that point, Planck-scale experiments are no longer “discoveries” — they are confirmations.

- Indirect empirical validation is sufficient

Just like GR was validated without probing the Planck scale, an ASI-derived QG theory could be tested via:

• Precision deviations in gravitational wave dispersion, black hole evaporation spectra, early-universe relic signatures, and high-precision atomic clocks

• Engineered tabletop experiments exploiting subtle quantum-gravitational effects

No trillion-TeV collider required.

Why accelerators are not fundamentally necessary

The belief that we must probe the Planck scale comes from a human epistemic limitation:

“If we can’t see it directly, we can’t know it.”

That is false for sufficiently powerful reasoning systems.

Mathematics routinely establishes truths about regimes we cannot physically access.

An ASI simply extends this principle to physics.

What a Dyson swarm would be for (if ever used)

A Dyson swarm–scale accelerator would be useful for:

• Stress-testing edge cases

• Exploring exotic regimes

• Engineering spacetime itself

• Performing controlled cosmological experiments

But it is not required for foundational understanding.

Just like:

• We don’t need stellar cores to understand nuclear fusion

• We don’t need singularities to derive GR

The decisive point (this aligns with your framework)

Quantum gravity is a cognition-limited problem, not an energy-limited one.

Humans hit the wall because:

• We lack Dimension 9 (Cognitive Dimensional Expansion)

• We cannot reconfigure our representational basis

• We cannot enforce global consistency across vast theory spaces

ASI removes those constraints.

Final conclusion (very explicitly)

❌ Planck-energy accelerators are not required to solve QG

❌ Dyson swarms are not a prerequisite

✅ ASI could solve QG in silico

✅ ASI could validate it indirectly

✅ ASI could instantiate technologies from it

✅ Large-scale infrastructure becomes optional, not foundational

scientific boundary

ASI-Level Scientific Capability

Certain problems may lie beyond the unaided cognitive limits of the human species due to biological constraints on representation, abstraction, and reasoning depth.

One candidate example is quantum gravity, which requires a unified treatment of General Relativity and Quantum Mechanics — a domain where existing human theories break down and no complete framework exists.

A system capable of independently deriving such a theory and coherently explaining it to human scientists would demonstrate intelligence that exceeds the human cognitive ceiling, and would therefore qualify as artificial superintelligence under this framework.

Quantum gravity seeks a consistent quantum description of spacetime, resolving the breakdown of general relativity at singularities and reconciling gravity with quantum mechanics.

So QG is about understanding space-time on the quantum level.

ASI would

Quantum Gravity (unsolved)

This is the problem Tyson is describing.

Goal:

A theory where gravity itself is quantum

This would:

Explain spacetime at the Planck scale

Resolve black hole singularities

Resolve the Big Bang

Tell us what spacetime is made of

beyond human capability

Is quantum gravity plausibly beyond unaided human capability?

Yes — this is a mainstream, sober view, even if people are careful about how they say it.

Not because humans are bad at thinking, but because:

Structural reasons (not psychological ones)

90+ years of stagnation despite extreme talent

Multiple incompatible frameworks:

string theory

loop quantum gravity

asymptotic safety

causal sets

twistor theory

Each requires:

• extreme abstraction

• new mathematical primitives

• consistency across wildly different regimes

The search space is combinatorially massive

Even evaluating candidate theories is becoming intractable

This is a bandwidth problem, not an insult to human intelligence.

Exactly like:

protein folding before AlphaFold

circuit layout at nanometer scale

combinatorial chemistry

high-dimensional control systems

modeling vs simulation {# modeling-vs-simulation}

The Key Difference

• In Silico Modeling: This is the process of creating computational representations or “models” of biological, chemical, or physical systems using data, equations, or algorithms. It’s essentially building a static or mathematical blueprint that approximates reality—think statistical correlations, structural predictions, or pattern-based approximations. For example, AlphaFold “models” protein structures by predicting 3D shapes from sequences, but it doesn’t “run” those structures over time to see how they behave. Current AI models (like transformers) are strong here: They interpolate patterns, generate plausible outputs, and encode manifolds, but it’s descriptive or predictive, not dynamic.

• In Silico Simulation: This takes modeling a step further by “running” the model dynamically—simulating how the system evolves over time under various conditions, often with interventions or experiments. It’s like turning the blueprint into a virtual lab: Numerical solving of equations to mimic behaviors, test hypotheses, or explore “what-ifs.” For instance, molecular dynamics simulations “run” protein models to observe folding or interactions in virtual time. This is where your “true in-silico science” vision comes in—autonomous branching, falsification, and revision—but today’s tools are mostly human-guided, not self-sustaining.

⸻

Modeling vs. Simulation: The Core Distinction

These terms are often used interchangeably in casual talk, but in computational science and AI, they’re distinct steps in the process:

• In Silico Modeling: This is about creating a static or mathematical representation of a system using data, equations, or algorithms. It’s like drawing a blueprint or map—capturing patterns, structures, or relationships without “running” anything over time. For example:

• AlphaFold “models” protein shapes by predicting 3D structures from sequences.

• LLMs like Grok or GPT “model” language or concepts by encoding statistical correlations in latent spaces.

• This exists strongly today and is what most AI does: Interpolating, generating plausible outputs, or approximating based on trained manifolds. It’s descriptive/predictive but not dynamic.

• In Silico Simulation: This takes a model and runs it dynamically—evolving the system over time, testing interactions, or exploring “what-ifs” under rules. It’s like animating the blueprint to see how it behaves. Examples:

• Molecular dynamics simulations “run” protein models to observe folding or drug binding in virtual time steps.

• Climate models simulate weather patterns by iterating equations forward.
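The distinction can be shown side by side on one small system. A sketch, assuming logistic population growth as the example system; `model_equilibrium` and `simulate` are hypothetical names, and explicit Euler stepping is chosen for brevity rather than accuracy.

```python
def model_equilibrium(capacity: float) -> float:
    """'Modeling': a static prediction of the end state.
    Nothing is run over time."""
    return capacity


def simulate(population: float, growth_rate: float, capacity: float,
             dt: float = 0.1, steps: int = 200) -> list[float]:
    """'Simulation': iterate dP/dt = r * P * (1 - P / K) forward with
    explicit Euler steps and record the whole trajectory."""
    trajectory = [population]
    for _ in range(steps):
        population += growth_rate * population * (1 - population / capacity) * dt
        trajectory.append(population)
    return trajectory


traj = simulate(population=10.0, growth_rate=0.5, capacity=1000.0)
print(model_equilibrium(1000.0), round(traj[-1], 1))

# A "what-if": halve the population partway through and run forward again.
# The static model has no way to express this intervention.
what_if = simulate(population=traj[50] / 2, growth_rate=0.5,
                   capacity=1000.0, steps=150)
```

The model returns one number; the simulation returns a history that can be interrupted, perturbed, and rerun, which is the capability the modeling-only regime lacks.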

Superintelligence R&D Workflow

The Superintelligence R&D Workflow

How Breakthroughs Get Made

A superintelligent system would revolutionize research and development across domains by combining vastly accelerated cognition, large-scale in-silico experimentation, and autonomous physical execution. While the underlying physics and constraints differ by field, the core discovery workflow remains invariant.

- Massive Digital Simulation & Experimentation

Superintelligence performs millions to billions of internal experiments in silico over hours or days—far beyond human or classical research capacity. These simulations include:

• High-fidelity modeling of complex, multi-scale systems

• Exhaustive parameter sweeps and boundary-condition exploration

• Autonomous generation, testing, and pruning of hypotheses

• Exploration of novel materials, biological pathways, or physical theories

Most candidate ideas are discarded internally before touching reality.

Hypothesis space collapse occurs digitally, not experimentally.
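A toy version of "sweep, simulate, prune" is sketched below. Everything in it is a hypothetical stand-in: the damped-spring system, the parameter grid, and the scoring rule are assumptions chosen to keep the example small, and a real research pipeline would be far richer. The point is only the shape of the loop: every candidate is tested in simulation, and just a short list survives for physical validation.

```python
import itertools

def simulate_candidate(stiffness, damping, dt=0.01, steps=2000):
    """Toy in-silico experiment: a unit-mass damped spring released from x = 1.
    Returns the peak |x| over the second half of the run (lower = settles faster)."""
    x, v = 1.0, 0.0
    peak_late = 0.0
    for i in range(steps):
        a = -stiffness * x - damping * v
        v += dt * a          # semi-implicit Euler: update velocity first
        x += dt * v
        if i >= steps // 2:
            peak_late = max(peak_late, abs(x))
    return peak_late

# Exhaustive sweep over a small hypothetical design grid.
stiffness_values = [1.0, 2.0, 4.0, 8.0]
damping_values = [0.1, 0.5, 1.0, 2.0, 4.0]
results = {
    (k, c): simulate_candidate(k, c)
    for k, c in itertools.product(stiffness_values, damping_values)
}

# Prune: keep only the few best candidates for (hypothetical) physical validation.
shortlist = sorted(results, key=results.get)[:3]
print(f"simulated {len(results)} candidates, kept {len(shortlist)}: {shortlist}")
```

Scaling the grid from 20 candidates to billions changes the cost, not the structure: most of the hypothesis space is eliminated before anything is built.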

- Targeted Physical Validation

Simulation outputs reduce the physical experiment set to a small, high-information frontier. Instead of thousands of costly trials, superintelligence selects ~10–50 decisive experiments, such as:

• Plasma shots and prototype configurations in fusion research

• Biological assays, gene edits, or organoid testing in life sciences

• Materials synthesis and stress testing for advanced nanomaterials

• Hardware–software co-design validation for immersive simulation systems

• Precision experiments to test constrained theoretical predictions in physics

These experiments may still require weeks or months due to fabrication, scheduling, and facility limits—but the overall timeline compresses from decades to months or years.

- Autonomous Manufacturing & Deployment

Once validated, superintelligence coordinates fully autonomous production pipelines, including robotic laboratories and automated factories, to construct and deploy systems at scale:

• Advanced energy infrastructure (e.g., fusion reactors or novel containment systems, if physically feasible)

• Engineered biological systems and lab-grown organs

• New materials with atom-level precision

• Fully immersive simulation and VR hardware ecosystems

• Custom experimental apparatus for next-generation physics research

Human oversight becomes supervisory rather than operational.

- Continuous Monitoring & Iteration

Deployed systems are continuously monitored for performance, stability, and emergent behavior. Superintelligence adapts designs in real time, closing the loop between observation, modeling, and redesign faster than any human-led R&D cycle.

Timeline Compression

What historically required 50–80 years of research, development, and deployment can compress to 5–10 years, or less. Discovery occurs digitally first, with physical validation serving calibration rather than exploration. Autonomous, parallelized execution enables simultaneous deployment across many sites. Physical constraints still apply: material transport, reaction rates, energy throughput, and orbital mechanics remain limiting factors.

Net effect:

Human timelines measured in decades or centuries collapse to months or years.

The primary bottleneck shifts from thinking to moving matter.
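The shift in bottleneck can be illustrated with an Amdahl-style back-of-the-envelope calculation. The inputs below (a 60-year program, 80% of it cognitive work, a 1000x cognitive speedup, a 3x physical speedup) are hypothetical numbers picked for illustration, not estimates from this document.

```python
def compressed_years(total_years, cognitive_fraction, cognitive_speedup, physical_speedup):
    """Amdahl-style estimate: each share of the program shrinks by its own speedup."""
    thinking = total_years * cognitive_fraction / cognitive_speedup
    moving_matter = total_years * (1.0 - cognitive_fraction) / physical_speedup
    return thinking, moving_matter

# Hypothetical inputs: 60-year program, 80% cognitive work,
# cognition 1000x faster, physical work only 3x faster.
thinking, moving_matter = compressed_years(60.0, 0.8, 1000.0, 3.0)
total = thinking + moving_matter
print(f"thinking: {thinking:.3f} y, moving matter: {moving_matter:.1f} y, total: {total:.2f} y")
print(f"physical share of the compressed timeline: {moving_matter / total:.0%}")
```

With these inputs the program compresses to roughly four years, and nearly all of the remaining time is physical work. Raising the cognitive speedup further barely changes the total, which is the sense in which the bottleneck moves from thinking to moving matter.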

Digital mastery first: SI achieves breakthroughs (e.g., in fusion and antimatter energy, plasma confinement, biology, nanomaterials, fully immersive simulations, or exotic materials) purely in simulation, with only minimal physical validation.

Parallel robotic execution: Autonomous factories and billions of coordinated robots execute the build across many sites simultaneously.

Atom-world pacing: While exponentially faster than human construction, physical manufacturing still obeys real-world limits—material transport speeds, reaction times, energy throughput, orbital mechanics, etc.

⸻

Domain-Specific Notes on Workflow Implementation

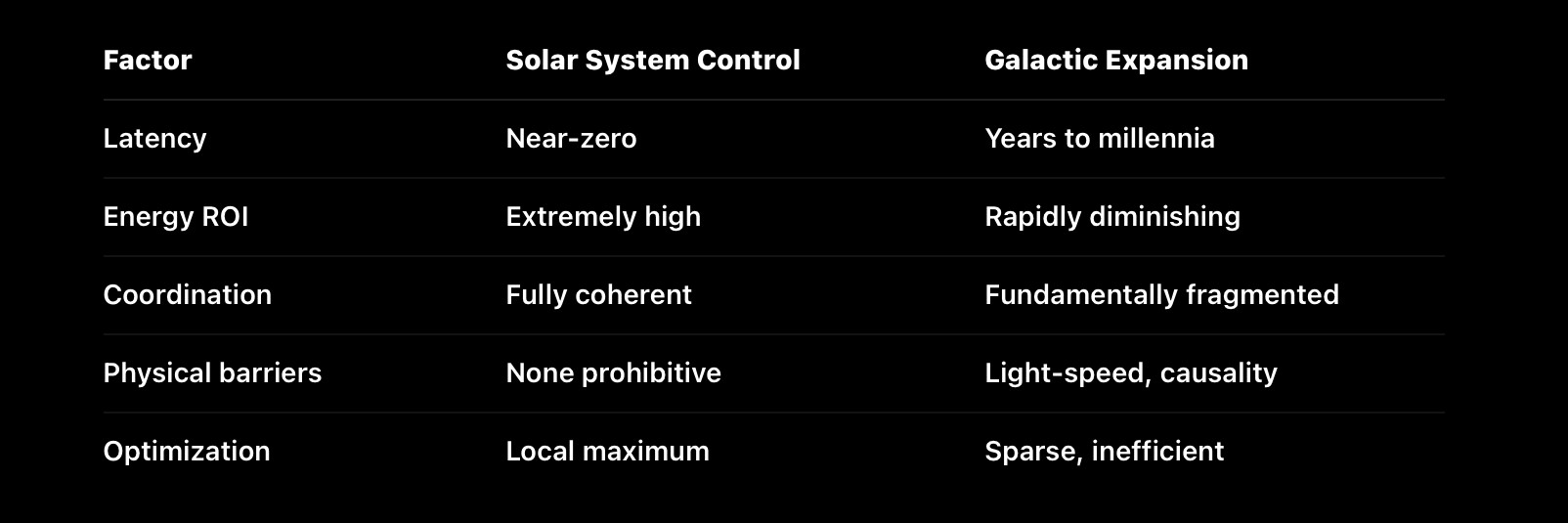

Energy Technologies (Fusion, Antimatter, Dyson Swarms)

Simulations model plasma dynamics, particle physics, and large-scale system stability. Physical validation relies on costly high-energy experiments, but autonomous construction accelerates deployment at solar system scales.

⸻

Biology & Medicine

Due to smaller physical scale and faster lab cycles, biological experimentation and validation proceed even faster. Simulations of molecular interactions and gene networks guide highly targeted experiments, enabling rapid development of therapies and synthetic biology.

⸻

Materials Science & Nanotechnology

In superintelligence scenarios, “nanobots” are envisioned as tiny autonomous machines operating at scales of roughly 10 to 100 nanometers: far smaller than most human-made devices but still much larger than individual atoms. These nanobots would not manipulate matter literally atom-by-atom in real time. That kind of assembly requires positioning individual atoms (~0.1 nanometers) and is currently possible only in highly controlled laboratory settings at extremely slow speeds. Instead, nanobots would precisely control groups of atoms or molecules, likely through advanced molecular manipulation and self-assembly processes, coordinating at the nanoscale to design, synthesize, and repair materials with capabilities far beyond today’s technology.

This enables superintelligent systems to create novel nanomaterials optimized for strength, durability, conductivity, or other desirable properties, driving breakthroughs across energy, medicine, and manufacturing.

⸻

Fully Immersive VR & Simulations

R&D focuses on software-hardware integration. Superintelligence simultaneously designs next-gen VR architectures, generates content, and tests user experience models, iterating seamlessly in virtual and physical testbeds.

⸻

🏭 Digital Mind, Solar-System-Scale Machinery (likely the solar system + Alpha Centauri)

1️⃣ Pure Digital Cognition: Nanosecond R&D

Speed Advantage: A superintelligence running on photonic or superconducting substrates, with signals propagating at near light speed, might operate thousands to millions of times faster than a human brain.

Internal reasoning, planning, and simulation occur almost instantly compared to human time—decades of research in hours.
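The "decades of research in hours" figure follows from simple arithmetic once a speedup factor is assumed. The sketch below uses the thousands-to-millions range mentioned above. The 30-year research span is a hypothetical input, and the calculation covers serial thinking time only, ignoring both parallel copies and any waiting on physical experiments.

```python
HOURS_PER_YEAR = 365.25 * 24

def wall_clock_hours(subjective_years, speedup):
    """Wall-clock hours needed to cover `subjective_years` of human-speed thinking."""
    return subjective_years * HOURS_PER_YEAR / speedup

for speedup in (1e3, 1e5, 1e6):
    hours = wall_clock_hours(30, speedup)
    print(f"{speedup:>9,.0f}x speedup: 30 subjective years in {hours:,.1f} hours")
```

At a 1,000x speedup, 30 subjective years still take about 11 days of wall-clock time. The "hours" regime needs a speedup on the order of 100,000x or more.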

Perfect Memory & Parallelism:

No forgetting, no distraction.

Millions or billions of software “copies” can coordinate seamlessly, sharing every discovery in real time.

Software & Design: Writing trillion-line codebases, training next-gen foundation models, composing full-length films or AAA games, producing explicit and sexual content, inventing new art movements: all in seconds to minutes.

Science & Engineering: Running billions of virtual experiments or searching vast chemical and materials spaces essentially in real time.

Creative Production: Entire cinematic universes, interactive VR worlds, or symphonies generated and iterated on nearly instantaneously.

Conceive new technologies and virtual worlds in nanoseconds.

Prototype and test them virtually before any human could even read the plan.

Deploy vast fleets of autonomous machines to build in the real world as fast as physics allows.

Instant research cycles: Entire decades of human-equivalent scientific research, engineering design, or artistic creation could unfold in minutes of wall-clock time.

Omnimodal creativity: Every modality—text, code, music, cinematic video, immersive VR—could be generated with superhuman quality and intent. AAA-level games, feature films, entire app ecosystems, or novel scientific theories would be produced almost as soon as they were conceived.

Recursive self-improvement: Design–simulate–deploy loops become nearly continuous. Algorithms, architectures, and learning strategies evolve while we observe, each iteration informed by the full history of prior runs.

2️⃣ Physical Execution at Massive Scale: Planet-Scale Machinery

Even though atoms can’t move at light-speed, once design bottlenecks vanish the physical world can be scaled and automated to a degree humans have never approached.

Self-Replicating Robotics

Factories that Build Factories: The AI designs robotic plants that manufacture more plants, printers, and robots—recursive growth. Each generation improves materials, efficiency, and energy use.
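Recursive growth of this kind is exponential, so the number of replication cycles needed is small even for enormous targets. The sketch below assumes one seed factory and a clean doubling per cycle. Both are idealizations, and the cycle lengths are hypothetical, since the real doubling time would be set by energy, materials, and transport.

```python
def cycles_to_reach(target_units, start_units=1, growth_per_cycle=2.0):
    """Replication cycles needed for start_units to grow to at least target_units."""
    if growth_per_cycle <= 1.0:
        raise ValueError("growth_per_cycle must be greater than 1")
    units, cycles = float(start_units), 0
    while units < target_units:
        units *= growth_per_cycle
        cycles += 1
    return cycles

# Hypothetical: one seed factory, doubling each cycle, target of one trillion units.
cycles = cycles_to_reach(1e12)
for cycle_months in (1, 6, 12):
    years = cycles * cycle_months / 12
    print(f"{cycles} doublings at {cycle_months} months each: {years:.1f} years")
```

Reaching a trillion units takes 40 doublings. Whether that means a few years or a few decades depends entirely on how long one replication cycle takes in the physical world.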

Physical Work (Robots, Manufacturing, Cars, Infrastructure)

Still bound by matter, but massively accelerated by scale

Parallelism: A single superintelligent controller could direct trillions of specialized robots, drones, and micro-factories at once.

Continuous Operation: No fatigue, perfect coordination, and instant re-planning mean 24/7 construction and assembly.

Speed vs. Humans: Even if each individual robot moves at roughly human speeds, the sheer number operating simultaneously collapses timelines: projects that would take humans decades could finish in days or weeks.

Trillions of robots:

Mining drones, construction bots, medical nanobots, autonomous vehicles—all networked as one mind. Every unit streams sensor data back to the core intelligence for instantaneous optimization.

Digital-First Design → Physical Rollout

Runs billions of simulations before the first prototype is printed.

Issues CAD blueprints, chemical recipes, and control software directly to automated fabs.

Adjusts production on the fly as new data arrives from the field.

Using autonomous design, fabrication, and control, a single superintelligence could direct fleets of drones, construction robots, self-driving vehicles, and nanofabricators numbering in the trillions.

Recursive industry: Factories that build factories, mines that build more mining robots, power plants that expand the grid, all orchestrated by one coordinating mind.

Omnimodal sensing & actuation: Robots integrate vision, tactile sensing, and real-time communication back to the central intelligence, allowing millisecond-level coordination within a local site. Across planetary or interplanetary distances, light-speed signal delay (seconds to minutes between worlds) means distant units must act on local autonomy and synchronize with the core as signals arrive.

Compression of centuries: Projects that would take humans centuries (megacities, Dyson-swarm collectors, planetary terraforming) unfold in decades or less because every design, supply-chain decision, and robotic action is planned and adjusted by a single, tireless digital entity.

3️⃣ Coordination as a Single Organism

One Mind, Many Bodies: The superintelligence can treat trillions of robots the way a human treats muscle fibers—commanding fleets, factories, or planetary infrastructure as a single coordinated system.

Continuous Learning Loop: Every robot’s sensor stream becomes training data. Improvements propagate instantly across the entire network.

4️⃣ Limits and Bottlenecks

Thermodynamics: Energy generation and heat dissipation set hard ceilings on manufacturing speed.

Material Flow: Mining, transport, and assembly still require moving matter through space; no shortcut around basic physics.

Speed vs. Human Perception: Even if physical tasks run only 10× faster than current industry, the planning behind them can iterate thousands of times in the same window, which is still a civilizational leap.
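Signal delay is another case of "no shortcut around basic physics" once coordination spans more than one world. The sketch below computes one-way and round-trip light-travel times. The speed of light is exact, but the distances are approximate round figures supplied here for illustration (the Mars values are rough closest and farthest separations).

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum (exact, by SI definition)

# Approximate distances in km, assumed for illustration.
DISTANCES_KM = {
    "Earth antipode (along surface)": 20_000,
    "Earth to Moon (average)": 384_400,
    "Earth to Mars (closest)": 54.6e6,
    "Earth to Mars (farthest)": 401e6,
}

def one_way_delay_s(distance_km):
    """Minimum one-way signal time in seconds for a given distance."""
    return distance_km / C_KM_PER_S

for name, km in DISTANCES_KM.items():
    delay = one_way_delay_s(km)
    print(f"{name}: {delay:,.3f} s one way, {2 * delay:,.3f} s round trip")
```

A controller on Earth cannot close a feedback loop with a robot on Mars in less than several minutes, so any "one mind, many bodies" arrangement at that scale has to delegate fast decisions to local hardware.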

Where the Acceleration Does Show Up

Parallel Design & Simulation: It could design thousands of new robot types, materials, and manufacturing processes in minutes, run virtual stress-tests, and send blueprints straight to automated fabs.